| Parameter |

Description |

Type |

Options |

Default Value |

input |

Data to be predicted, supports multiple input types |

Python Var/str/list |

- Python Variable, such as image data represented by

numpy.ndarray

- File Path, such as the local path of an image file:

/root/data/img.jpg

- URL Link, such as the network URL of an image file: Example

- Local Directory, the directory should contain data files to be predicted, such as the local path:

/root/data/

- List, elements of the list should be data of the above types, such as

[numpy.ndarray, numpy.ndarray], [\"/root/data/img1.jpg\", \"/root/data/img2.jpg\"], [\"/root/data1\", \"/root/data2\"]

|

None |

batch_size |

Batch size |

int |

Any integer |

1 |

target_size |

Image size during inference (W, H) |

int/tuple |

- -1, indicating inference using the original image size

- None, indicating the settings from the lower priority are used. The priority order for parameter settings is:

predict parameter > create_model initialization > yaml configuration file

- int, such as 512, indicating inference using a resolution of

(512, 512)

- tuple, such as (512, 1024), indicating inference using a resolution of

(512, 1024)

|

None |

* The prediction results are processed as `dict` type for each sample, and support operations such as printing, saving as an image, and saving as a `json` file:

| Method |

Description |

Parameter |

Parameter Type |

Parameter Description |

Default Value |

print() |

Print the result to the terminal |

format_json |

bool |

Whether to format the output content with JSON indentation |

True |

indent |

int |

Specify the indentation level to beautify the output JSON data, making it more readable. This is only effective when format_json is True |

4 |

ensure_ascii |

bool |

Control whether to escape non-ASCII characters to Unicode. When set to True, all non-ASCII characters will be escaped; False retains the original characters. This is only effective when format_json is True |

False |

save_to_json() |

Save the result as a file in json format |

save_path |

str |

The file path for saving. When it is a directory, the saved file name will match the input file name |

None |

indent |

int |

Specify the indentation level to beautify the output JSON data, making it more readable. This is only effective when format_json is True |

4 |

ensure_ascii |

bool |

Control whether to escape non-ASCII characters to Unicode. When set to True, all non-ASCII characters will be escaped; False retains the original characters. This is only effective when format_json is True |

False |

save_to_img() |

Save the result as a file in image format |

save_path |

str |

The file path for saving. When it is a directory, the saved file name will match the input file name |

None |

* Additionally, it also supports obtaining the visualization image with results and the prediction results through attributes, as follows:

👉 Verification Result Details (click to expand)

The specific content of the verification result file is:

{

"done_flag": true,

"check_pass": true,

"attributes": {

"train_sample_paths": [

"check_dataset/demo_img/P0005.jpg",

"check_dataset/demo_img/P0050.jpg"

],

"train_samples": 267,

"val_sample_paths": [

"check_dataset/demo_img/N0139.jpg",

"check_dataset/demo_img/P0137.jpg"

],

"val_samples": 76,

"num_classes": 2

},

"analysis": {

"histogram": "check_dataset/histogram.png"

},

"dataset_path": "seg_optic_examples",

"show_type": "image",

"dataset_type": "SegDataset"

}

The verification results above indicate that check_pass being True means the dataset format meets the requirements. Explanations for other indicators are as follows:

attributes.num_classes: The number of classes in this dataset is 2;attributes.train_samples: The number of training samples in this dataset is 267;attributes.val_samples: The number of validation samples in this dataset is 76;attributes.train_sample_paths: A list of relative paths to the visualization images of training samples in this dataset;attributes.val_sample_paths: A list of relative paths to the visualization images of validation samples in this dataset;

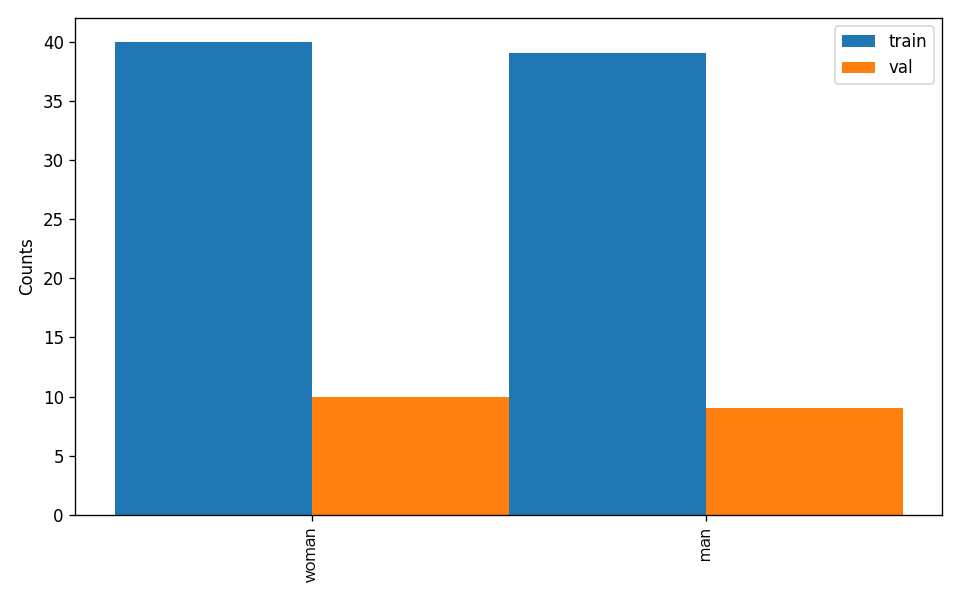

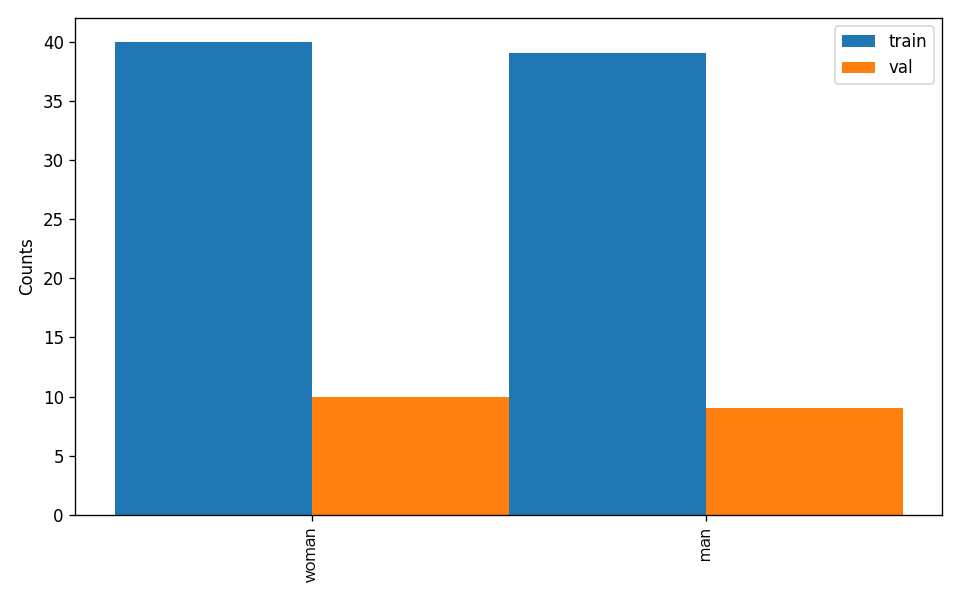

The dataset verification also analyzes the distribution of sample numbers across all classes and plots a histogram (histogram.png):

👉 Details on Format Conversion/Dataset Splitting (Click to Expand)

After completing dataset verification, you can convert the dataset format or re-split the training/validation ratio by modifying the configuration file or appending hyperparameters.

(1) Dataset Format Conversion

Semantic segmentation supports converting LabelMe format datasets to the required format.

Parameters related to dataset verification can be set by modifying the CheckDataset fields in the configuration file. Example explanations for some parameters in the configuration file are as follows:

CheckDataset:convert:enable: Whether to enable dataset format conversion, supporting LabelMe format conversion, default is False;src_dataset_type: If dataset format conversion is enabled, the source dataset format needs to be set, default is null, and the supported source dataset format is LabelMe;

For example, if you want to convert a LabelMe format dataset, you can download a sample LabelMe format dataset as follows:

wget https://paddle-model-ecology.bj.bcebos.com/paddlex/data/seg_dataset_to_convert.tar -P ./dataset

tar -xf ./dataset/seg_dataset_to_convert.tar -C ./dataset/

After downloading, modify the paddlex/configs/modules/semantic_segmentation/PP-LiteSeg-T.yaml configuration as follows:

......

CheckDataset:

......

convert:

enable: True

src_dataset_type: LabelMe

......

Then execute the command:

python main.py -c paddlex/configs/modules/semantic_segmentation/PP-LiteSeg-T.yaml \

-o Global.mode=check_dataset \

-o Global.dataset_dir=./dataset/seg_dataset_to_convert

Of course, the above parameters also support being set by appending command-line arguments. For a LabelMe format dataset, the command is:

python main.py -c paddlex/configs/modules/semantic_segmentation/PP-LiteSeg-T.yaml \

-o Global.mode=check_dataset \

-o Global.dataset_dir=./dataset/seg_dataset_to_convert \

-o CheckDataset.convert.enable=True \

-o CheckDataset.convert.src_dataset_type=LabelMe

(2) Dataset Splitting

Parameters for dataset splitting can be set by modifying the CheckDataset fields in the configuration file. Example explanations for some parameters in the configuration file are as follows:

CheckDataset:split:enable: Whether to enable re-splitting the dataset, set to True to perform dataset splitting, default is False;train_percent: If re-splitting the dataset, set the percentage of the training set, which should be an integer between 0 and 100, ensuring the sum with val_percent is 100;

For example, if you want to re-split the dataset with a 90% training set and a 10% validation set, modify the configuration file as follows:

......

CheckDataset:

......

split:

enable: True

train_percent: 90

val_percent: 10

......

Then execute the command:

python main.py -c paddlex/configs/modules/semantic_segmentation/PP-LiteSeg-T.yaml \

-o Global.mode=check_dataset \

-o Global.dataset_dir=./dataset/seg_optic_examples

After dataset splitting, the original annotation files will be renamed to xxx.bak in the original path.

The above parameters also support setting through appending command line arguments:

python main.py -c paddlex/configs/modules/semantic_segmentation/PP-LiteSeg-T.yaml \

-o Global.mode=check_dataset \

-o Global.dataset_dir=./dataset/seg_optic_examples \

-o CheckDataset.split.enable=True \

-o CheckDataset.split.train_percent=90 \

-o CheckDataset.split.val_percent=10

Note: The image link may not be accessible due to network issues or problems with the link itself. If you need to access the image, please check the validity of the link and try again.

Related methods, parameters, and explanations are as follows:

* The `create_model` method instantiates a general semantic segmentation model (here using `PP-LiteSeg-T` as an example), with specific explanations as follows:

Note: The image link may not be accessible due to network issues or problems with the link itself. If you need to access the image, please check the validity of the link and try again.

Related methods, parameters, and explanations are as follows:

* The `create_model` method instantiates a general semantic segmentation model (here using `PP-LiteSeg-T` as an example), with specific explanations as follows: