pedestrian_attribute_recognition.en.md 29 KB

comments: true

Pedestrian Attribute Recognition Pipeline Tutorial

1. Introduction to Pedestrian Attribute Recognition Pipeline

Pedestrian attribute recognition is a key function in computer vision systems, used to locate and label specific characteristics of pedestrians in images or videos, such as gender, age, clothing color, and style. This task not only requires accurately detecting pedestrians but also identifying detailed attribute information for each pedestrian. The pedestrian attribute recognition pipeline is an end-to-end serial system for locating and recognizing pedestrian attributes, widely used in smart cities, security surveillance, and other fields, significantly enhancing the system's intelligence level and management efficiency.This production line also offers a flexible service-oriented deployment approach, supporting the use of multiple programming languages on various hardware platforms. Moreover, this production line provides the capability for secondary development. You can train and optimize models on your own dataset based on this production line, and the trained models can be seamlessly integrated.

The pedestrian attribute recognition pipeline includes a pedestrian detection module and a pedestrian attribute recognition module, with several models in each module. Which models to use specifically can be selected based on the benchmark data below. If you prioritize model accuracy, choose models with higher accuracy; if you prioritize inference speed, choose models with faster inference; if you prioritize model storage size, choose models with smaller storage.

The pedestrian attribute recognition pipeline includes a pedestrian detection module and a pedestrian attribute recognition module, with several models in each module. Which models to use specifically can be selected based on the benchmark data below. If you prioritize model accuracy, choose models with higher accuracy; if you prioritize inference speed, choose models with faster inference; if you prioritize model storage size, choose models with smaller storage.

Pedestrian Detection Module:

| Model | Model Download Link | mAP(0.5:0.95) | mAP(0.5) | CPU Inference Time (ms) [Normal Mode / High-Performance Mode] |

CPU Inference Time (ms) [Normal Mode / High-Performance Mode] |

Model Size (M) | Description |

|---|---|---|---|---|---|---|---|

| PP-YOLOE-L_human | Inference Model/Trained Model | 48.0 | 81.9 | 33.27 / 9.19 | 173.72 / 173.72 | 196.02 | Pedestrian detection model based on PP-YOLOE |

| PP-YOLOE-S_human | Inference Model/Trained Model | 42.5 | 77.9 | 9.94 / 3.42 | 54.48 / 46.52 | 28.79 |

Note: The above accuracy metrics are mAP(0.5:0.95) on the CrowdHuman dataset. All model GPU inference times are based on an NVIDIA Tesla T4 machine with FP32 precision. CPU inference speeds are based on an Intel(R) Xeon(R) Gold 5117 CPU @ 2.00GHz with 8 threads and FP32 precision.

Pedestrian Attribute Recognition Module:

| Model | Model Download Link | mAP (%) | CPU Inference Time (ms) [Normal Mode / High-Performance Mode] |

CPU Inference Time (ms) [Normal Mode / High-Performance Mode] |

Model Size (M) | Description |

|---|---|---|---|---|---|---|

| PP-LCNet_x1_0_pedestrian_attribute | Inference Model/Trained Model | 92.2 | 2.35 / 0.49 | 3.17 / 1.25 | 6.7 M | PP-LCNet_x1_0_pedestrian_attribute is a lightweight pedestrian attribute recognition model based on PP-LCNet, covering 26 categories. |

Note: The above accuracy metrics are mA on PaddleX's internally built dataset. GPU inference times are based on an NVIDIA Tesla T4 machine with FP32 precision. CPU inference speeds are based on an Intel(R) Xeon(R) Gold 5117 CPU @ 2.00GHz with 8 threads and FP32 precision.

2. Quick Start

The model pipelines provided by PaddleX can be experienced locally using the command line or Python for pedestrian attribute recognition.

2.1 Online Experience

Online experience is not currently supported.

2.2 Local Experience

Before using the pedestrian attribute recognition pipeline locally, please ensure that you have completed the installation of the PaddleX wheel package according to the PaddleX Local Installation Guide.

2.2.1 Command Line Experience

You can quickly experience the pedestrian attribute recognition pipeline with a single command. Use the test image and replace --input with your local path for prediction.

paddlex --pipeline pedestrian_attribute_recognition --input pedestrian_attribute_002.jpg --device gpu:0 --save_path ./output/

The relevant parameter descriptions can be found in the parameter explanation section of 2.2.2 Python Script Integration.

After running, the result will be printed to the terminal, as shown below:

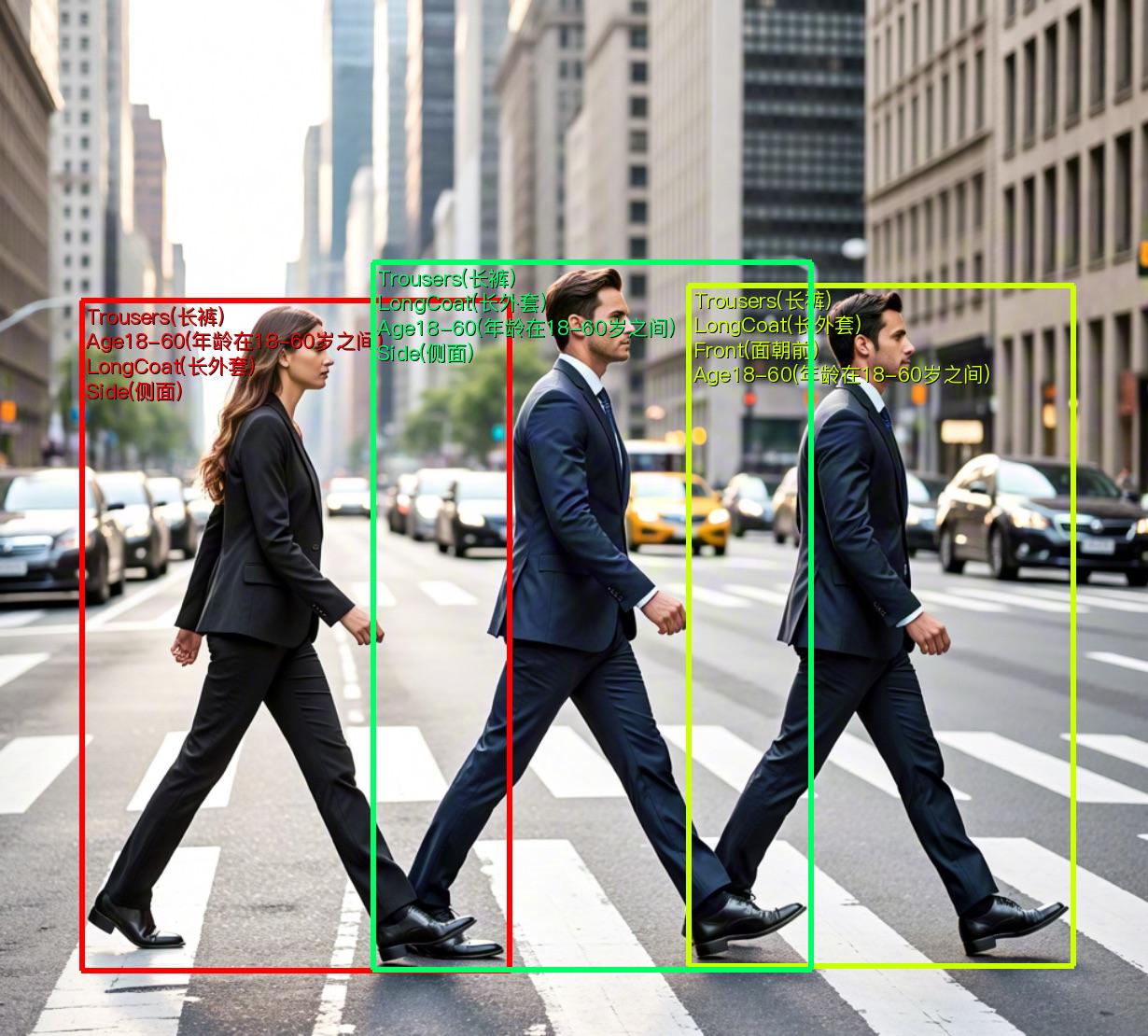

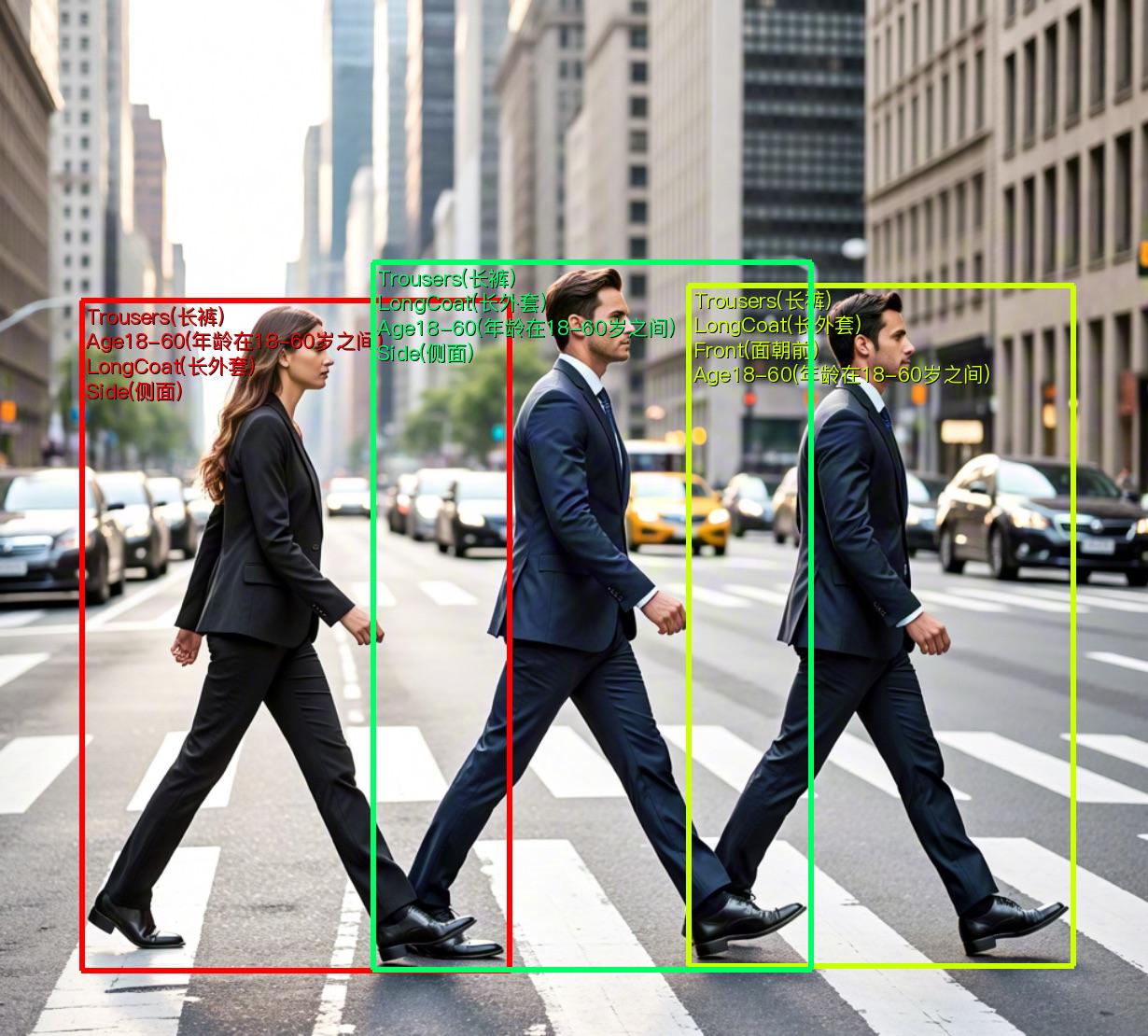

{'res': {'input_path': 'pedestrian_attribute_002.jpg', 'boxes': [{'labels': ['Trousers(长裤)', 'Age18-60(年龄在18-60岁之间)', 'LongCoat(长外套)', 'Side(侧面)'], 'cls_scores': array([0.99965, 0.99963, 0.98866, 0.9624 ]), 'det_score': 0.9795178771018982, 'coordinate': [87.24581, 322.5872, 546.2697, 1039.9852]}, {'labels': ['Trousers(长裤)', 'LongCoat(长外套)', 'Front(面朝前)', 'Age18-60(年龄在18-60岁之间)'], 'cls_scores': array([0.99996, 0.99872, 0.93379, 0.71614]), 'det_score': 0.967143177986145, 'coordinate': [737.91626, 306.287, 1150.5961, 1034.2979]}, {'labels': ['Trousers(长裤)', 'LongCoat(长外套)', 'Age18-60(年龄在18-60岁之间)', 'Side(侧面)'], 'cls_scores': array([0.99996, 0.99514, 0.98726, 0.96224]), 'det_score': 0.9645745754241943, 'coordinate': [399.45944, 281.9107, 869.5312, 1038.9962]}]}}

For the explanation of the running result parameters, you can refer to the result interpretation in Section 2.2.2 Integration via Python Script.

The visualization results are saved under save_path, and the visualization result is as follows:

2.2.2 Integration via Python Script

The above command line is for quick experience and viewing of results. Generally, in projects, integration through code is often required. You can complete the pipeline's fast inference with just a few lines of code. The inference code is as follows:

from paddlex import create_pipeline pipeline = create_pipeline(pipeline="pedestrian_attribute_recognition") output = pipeline.predict("pedestrian_attribute_002.jpg") for res in output: res.print() res.save_to_img("./output/") res.save_to_json("./output/")

The results obtained are the same as those from the command line method.

In the above Python script, the following steps are executed:

(1) The pedestrian attribute recognition production line object is instantiated via create_pipeline(). The specific parameter descriptions are as follows:

| Parameter | Parameter Description | Parameter Type | Default Value |

|---|---|---|---|

pipeline |

The name of the production line or the path to the production line configuration file. If it is the name of a production line, it must be supported by PaddleX. | str |

None |

config |

Specific configuration information for the production line (if set simultaneously with pipeline, it has higher priority than pipeline, and the production line name must be consistent with pipeline). |

dict[str, Any] |

None |

device |

The device used for production line inference. It supports specifying the specific card number of GPUs, such as "gpu:0", other hardware card numbers, such as "npu:0", and CPUs, such as "cpu". | str |

gpu:0 |

use_hpip |

Whether to enable high-performance inference. This is only available if the production line supports high-performance inference. | bool |

False |

(2) The predict() method of the pedestrian attribute recognition production line object is called to perform inference prediction. This method returns a generator. Below are the parameters and their descriptions for the predict() method:

| Parameter | Parameter Description | Parameter Type | Options | Default Value |

|---|---|---|---|---|

input |

The data to be predicted. It supports multiple input types and is required. | Python Var|str|list |

|

None |

device |

The device used for production line inference. | str|None |

|

None |

det_threshold |

Threshold for pedestrian detection visualization. | float | None |

|

0.5 |

cls_threshold |

Threshold for pedestrian attribute prediction. | float | dict | list | None |

|

0.7 |

3) Process the prediction results. Each sample's prediction result is of type dict, and supports operations such as printing, saving as an image, and saving as a json file:

| Method | Description | Parameter | Type | Description | Default Value |

|---|---|---|---|---|---|

print() |

Print the result to the terminal | format_json |

bool |

Whether to format the output content using JSON indentation |

True |

indent |

int |

Specify the indentation level to beautify the output JSON data and make it more readable. This is only effective when format_json is True |

4 | ||

ensure_ascii |

bool |

Control whether to escape non-ASCII characters to Unicode. If set to True, all non-ASCII characters will be escaped; False will retain the original characters. This is only effective when format_json is True |

False |

||

save_to_json() |

Save the result as a JSON file | save_path |

str |

The path to save the file. If it is a directory, the saved file will have the same name as the input file | None |

indent |

int |

Specify the indentation level to beautify the output JSON data and make it more readable. This is only effective when format_json is True |

4 | ||

ensure_ascii |

bool |

Control whether to escape non-ASCII characters to Unicode. If set to True, all non-ASCII characters will be escaped; False will retain the original characters. This is only effective when format_json is True |

False |

||

save_to_img() |

Save the result as an image file | save_path |

str |

The path to save the file, supporting both directory and file paths | None |

Calling the

print()method will print the result to the terminal, and the content printed to the terminal is explained as follows:input_path:(str)The input path of the image to be predicted.page_index:(Union[int, None])If the input is a PDF file, it indicates the current page number of the PDF; otherwise, it isNone.boxes:(List[Dict])Indicates the category ID of the prediction result.labels:(List[str])Indicates the category name of the prediction result.cls_scores:(List[numpy.ndarray])Indicates the confidence of the attribute prediction result.det_scores:(float)Indicates the confidence of the pedestrian detection box.

Calling the

save_to_json()method will save the above content to the specifiedsave_path. If a directory is specified, the saved path will besave_path/{your_img_basename}_res.json. If a file is specified, it will be saved directly to that file. Since JSON files do not support saving numpy arrays, thenumpy.arraytype will be converted to a list format.Calling the

save_to_img()method will save the visualization result to the specifiedsave_path. If a directory is specified, the saved path will besave_path/{your_img_basename}_res.{your_img_extension}. If a file is specified, it will be saved directly to that file. (The production line usually contains many result images, so it is not recommended to specify a specific file path directly, otherwise multiple images will be overwritten, and only the last image will be retained.)Additionally, it also supports obtaining visualized images with results and prediction results through attributes, as follows:

| Attribute | Description |

|---|---|

json |

Get the prediction result in json format |

img |

Get the visualized image in dict format |

- The prediction result obtained through the

jsonattribute is of typedict, and its content is consistent with the result saved by thesave_to_json()method. - The prediction result returned by the

imgattribute is a dictionary. The keyrescorresponds to the value of anImage.Imageobject: a visualized image displaying the attribute recognition result.

Additionally, you can obtain the pedestrian attribute recognition pipeline configuration file and load the configuration file for prediction. You can execute the following command to save the result in my_path:

paddlex --get_pipeline_config pedestrian_attribute_recognition --save_path ./my_path

If you have obtained the configuration file, you can customize the settings for the pedestrian attribute recognition production line by simply modifying the value of the pipeline parameter in the create_pipeline method to the path of the production line configuration file. The example is as follows:

from paddlex import create_pipeline

pipeline = create_pipeline(pipeline="./my_path/pedestrian_attribute_recognition.yaml")

output = pipeline.predict(

input="./pedestrian_attribute_002.jpg",

)

for res in output:

res.print()

res.save_to_img("./output/")

res.save_to_json("./output/")

Note: The parameters in the configuration file are the initialization parameters for the production line. If you wish to change the initialization parameters for the pedestrian attribute recognition production line, you can directly modify the parameters in the configuration file and load the configuration file for prediction. Additionally, CLI prediction also supports passing in a configuration file, simply specify the path to the configuration file with --pipeline.

3. Development Integration/Deployment

If the production line meets your requirements for inference speed and accuracy, you can proceed directly with development integration/deployment.

If you need to integrate the production line directly into your Python project, you can refer to the example code in 2.2.2 Python Script Integration.

In addition, PaddleX also provides three other deployment methods, which are detailed as follows:

🚀 High-Performance Inference: In practical production environments, many applications have strict performance requirements for deployment strategies, especially in terms of response speed, to ensure the efficient operation of the system and a smooth user experience. To this end, PaddleX provides a high-performance inference plugin, which aims to deeply optimize the performance of model inference and pre/post-processing to significantly speed up the end-to-end process. For detailed information on high-performance inference, please refer to the PaddleX High-Performance Inference Guide.

☁️ Service-Oriented Deployment: Service-oriented deployment is a common form of deployment in practical production environments. By encapsulating the inference functionality into a service, clients can access these services via network requests to obtain inference results. PaddleX supports multiple service-oriented deployment solutions for production lines. For detailed information on service-oriented deployment, please refer to the PaddleX Service-Oriented Deployment Guide.

Below are the API references for basic service-oriented deployment and examples of multi-language service calls:

For the main operations provided by the service: The main operations provided by the service are as follows: Get pedestrian attribute recognition results. Each element in Each element in API Reference

200, and the attributes of the response body are as follows:

Name

Type

Description

logIdstringThe UUID of the request.

errorCodeintegerError code. Fixed as

0.

errorMsgstringError message. Fixed as

"Success".

resultobjectThe result of the operation.

Name

Type

Description

logIdstringThe UUID of the request.

errorCodeintegerError code. Same as the response status code.

errorMsgstringError message.

inferPOST /pedestrian-attribute-recognition

Name

Type

Description

Required

imagestringThe URL of an image file accessible by the server or the Base64-encoded content of an image file.

Yes

result in the response body has the following attributes:

Name

Type

Description

pedestriansarrayInformation about the location and attributes of pedestrians.

imagestringThe result image of pedestrian attribute recognition. The image is in JPEG format and is Base64-encoded.

pedestrians is an object with the following attributes:

Name

Type

Description

bboxarrayThe location of the pedestrian. The elements in the array are the x-coordinate of the top-left corner, the y-coordinate of the top-left corner, the x-coordinate of the bottom-right corner, and the y-coordinate of the bottom-right corner of the bounding box.

attributesarrayThe attributes of the pedestrian.

scorenumberThe detection score.

attributes is an object with the following attributes:

Name

Type

Description

labelstringThe attribute label.

scorenumberThe classification score.

Multi-Language Service Call Examples

Python

import base64

import requests

API_URL = "http://localhost:8080/pedestrian-attribute-recognition" # Service URL image_path = "./demo.jpg" output_image_path = "./out.jpg"

Encode the local image using Base64

with open(image_path, "rb") as file:

image_bytes = file.read()

image_data = base64.b64encode(image_bytes).decode("ascii")

payload = {"image": image_data} # Base64-encoded file content or image URL

Call the API

response = requests.post(API_URL, json=payload)

Process the returned data

assert response.status_code == 200 result = response.json()["result"] with open(output_image_path, "wb") as file:

file.write(base64.b64decode(result["image"]))

print(f"Output image saved at {output_image_path}")

print("\nDetected pedestrians:")

print(result["pedestrians"])

📱 Edge Deployment: Edge deployment is a method of placing computing and data processing capabilities directly on user devices, allowing them to process data without relying on remote servers. PaddleX supports deploying models on edge devices such as Android. For detailed instructions, please refer to the PaddleX Edge Deployment Guide. You can choose the appropriate deployment method based on your needs to integrate the model pipeline into your AI applications.

4. Custom Development

If the default model weights provided by the pedestrian attribute recognition pipeline are not satisfactory in terms of accuracy or speed for your specific scenario, you can attempt to further fine-tune the existing models using your own domain-specific or application data to improve the recognition performance of the pipeline in your scenario.

4.1 Model Fine-Tuning

Since the pedestrian attribute recognition pipeline includes both a pedestrian attribute recognition module and a pedestrian detection module, if the pipeline's performance does not meet expectations, the issue may stem from either module. You can analyze the images with poor recognition results to determine which module is problematic and refer to the corresponding fine-tuning tutorial links in the table below for model fine-tuning.

| Scenario | Fine-Tuning Module | Fine-Tuning Reference Link |

|---|---|---|

| Inaccurate pedestrian detection | Pedestrian Detection Module | Link |

| Inaccurate attribute recognition | Pedestrian Attribute Recognition Module | Link |

4.2 Model Application

After you complete fine-tuning with your private dataset, you will obtain a local model weight file.

If you need to use the fine-tuned model weights, simply modify the production line configuration file by replacing the local path of the fine-tuned model weights to the corresponding position in the file:

pipeline_name: pedestrian_attribute_recognition

SubModules:

Detection:

module_name: object_detection

model_name: PP-YOLOE-L_human

model_dir: null # Replace with the path to the fine-tuned pedestrian detection model weights

batch_size: 1

threshold: 0.5

Classification:

module_name: multilabel_classification

model_name: PP-LCNet_x1_0_pedestrian_attribute

model_dir: null # Replace with the path to the fine-tuned pedestrian attribute recognition model weights

batch_size: 1

threshold: 0.7

Subsequently, refer to the command line method or Python script method in the local experience section to load the modified production line configuration file.

5. Multi-Hardware Support

PaddleX supports a variety of mainstream hardware devices, including NVIDIA GPU, Kunlunxin XPU, Ascend NPU, and Cambricon MLU. Simply modify the --device parameter to seamlessly switch between different hardware devices. For example, if you are using Ascend NPU for inference in the pedestrian attribute recognition production line, the Python command you would use is:

paddlex --pipeline pedestrian_attribute_recognition \

--input pedestrian_attribute_002.jpg \

--device npu:0

If you want to use the general Pedestrian Attribute Recognition pipeline on a wider range of hardware devices, please refer to the PaddleX Multi-Hardware Usage Guide.